Unity’s Mixed and Augmented Reality Studio (MARS): Is it worth the hype?

This post was co-authored by Craig Herndon, an XR Terra instructor.

Unity’s Mixed and Augmented Reality Studio (MARS), one of this year’s most anticipated releases in the XR development ecosystem, finally launched earlier this month. For many developers, the sticker price of $50/month or $600/year has shifted the discussion from a much-needed feature set to skepticism about the cost. The question is no longer “what is MARS?” but “is MARS worth it?” Here at XR Terra, we spent some time with MARS and would like to give creators and businesses information that will help them make that decision.

To help with that decision, we will cover the following MARS features:

- Simulation View

- Proxy-based Workflow

- Fuzzy Authoring

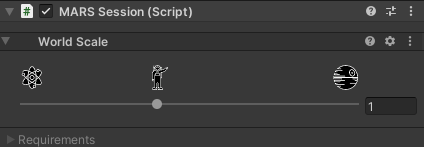

- MARS Session

Simulation View

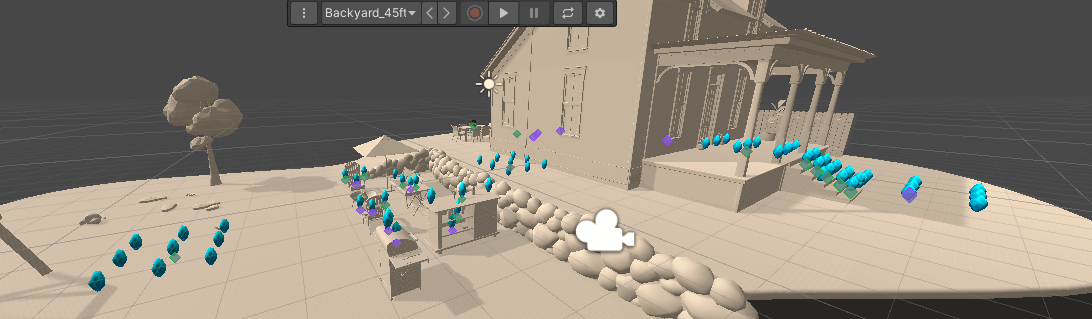

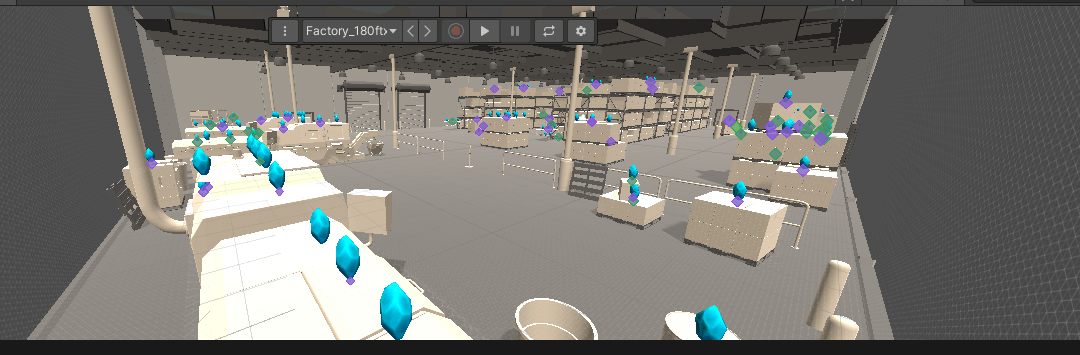

The biggest feature MARS provides is the Simulation View. It comes with environment templates that let you test an AR application in a wide variety of settings, such as a home, a factory, an office, or outdoors. You can even scan or model your own simulation environment. Before we get into how this works, let us look at the development workflow prior to MARS.

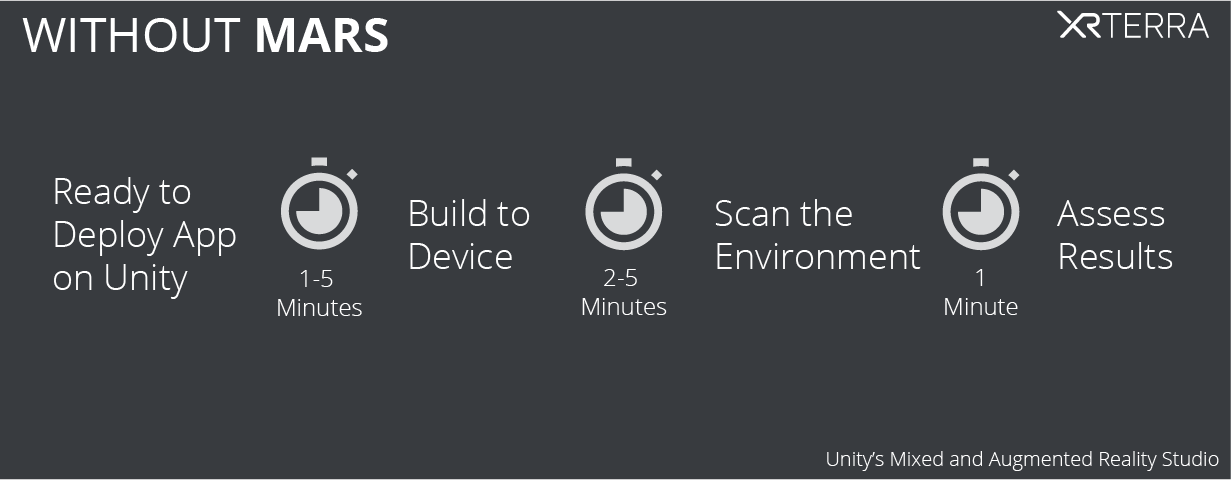

Pre-MARS Scenario:

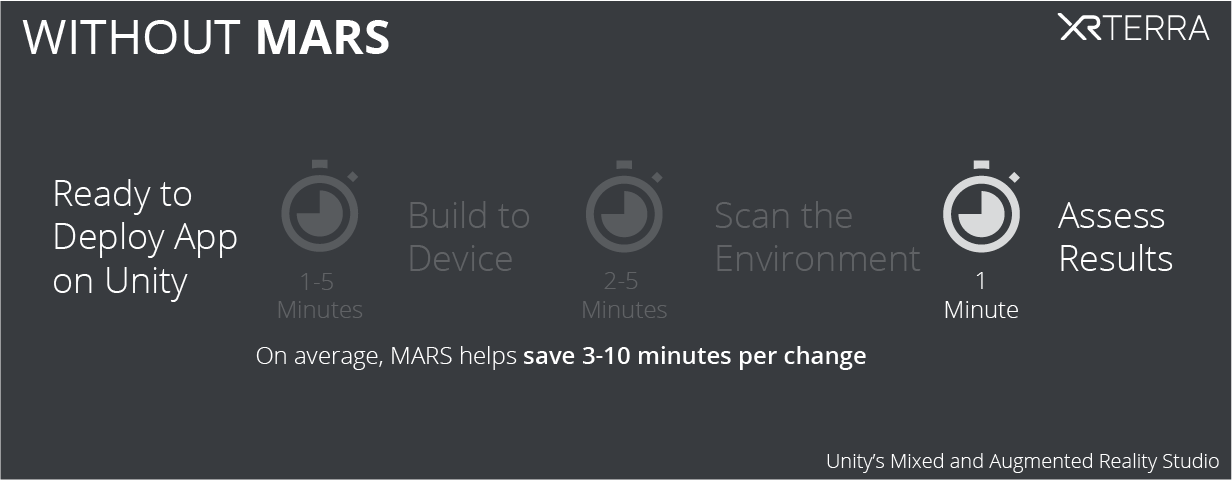

Without MARS, once the app is ready for testing in Unity, the developer needs to deploy it to a device. Depending on the PC’s specs and whether the target device runs Android or iOS, this can take anywhere from 1 to 5 minutes. This alone is a large loss of time for any developer.

Once the app is deployed, the developer needs to take their phone and scan for the kind of surface they are testing against. Chances are that the desk or table in the office is not the same as what the end user will be using. For example, if the expected use case is a factory, the developer will not be able to test reliably at all. This step can take another 2 to 5 minutes depending on what is being tested.

Only after these steps can the developer see the effect of their code changes (for example, whether a tweak worked or whether an object appears correctly), which takes at least another minute.

Best case scenario, the developer can make 15 changes in an hour. Worst case scenario, they can only make 4 changes in that hour. On average, most developers would fall in the 7 to 10 changes per hour range.
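For readers who want to plug in their own numbers, here is a minimal Python sketch of the arithmetic behind those estimates. The function name `changes_per_hour` is ours, purely for illustration. The deploy and scan times are the ranges quoted above; the 3-minute worst-case check time is an assumption on our part, since the only figure given for that step is "at least another minute".

```python
def changes_per_hour(deploy_min, scan_min, check_min):
    """Whole test iterations that fit in 60 minutes (hypothetical helper)."""
    cycle_min = deploy_min + scan_min + check_min  # one deploy/scan/check loop
    return 60 // cycle_min


# Best case: 1 min deploy + 2 min scan + 1 min check = 4 min per change.
best = changes_per_hour(1, 2, 1)

# Worst case: 5 min deploy + 5 min scan + an ASSUMED 3 min check = 13 min.
worst = changes_per_hour(5, 5, 3)

print(f"best case: {best} changes/hour, worst case: {worst} changes/hour")
```

With these inputs the sketch reproduces the 15 and 4 changes per hour quoted above. Swap in your own build and scan times to see where your team lands.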

Keep in mind that the above times are for the happy path where everything is going smoothly, and you aren’t trying to track down a weird bug with the Android Debug Bridge or the low-level debugger on iOS.

Post-MARS Scenario:

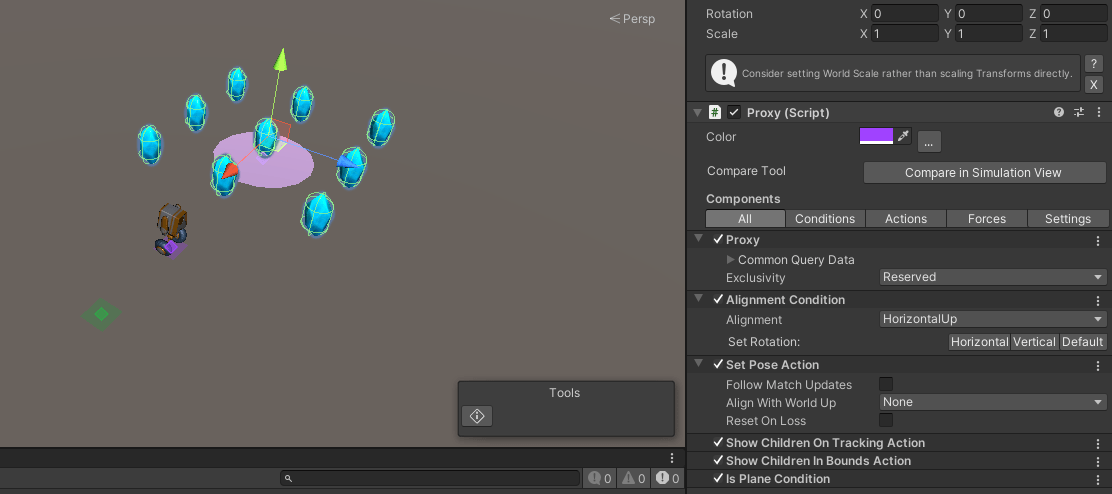

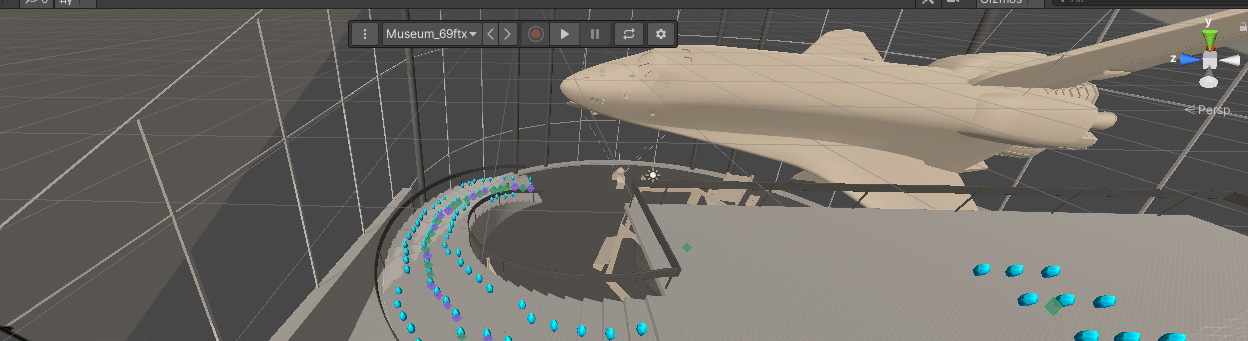

With MARS, developers can cut out steps 2 and 3 by testing in the simulation environments. In the MARS sample, we have an energetic robot collecting blue crystals. The robot spawns at the first area scanned by the phone, and the crystals spawn based on the type of surface the developer wants them to appear on.